The Copilot Question: Generic Convenience or Architectural Precision?

How business leaders should think about off-the-shelf copilots (ChatGPT, Microsoft Copilot, Claude, etc.) vs. business-specific AI that has the context of the business

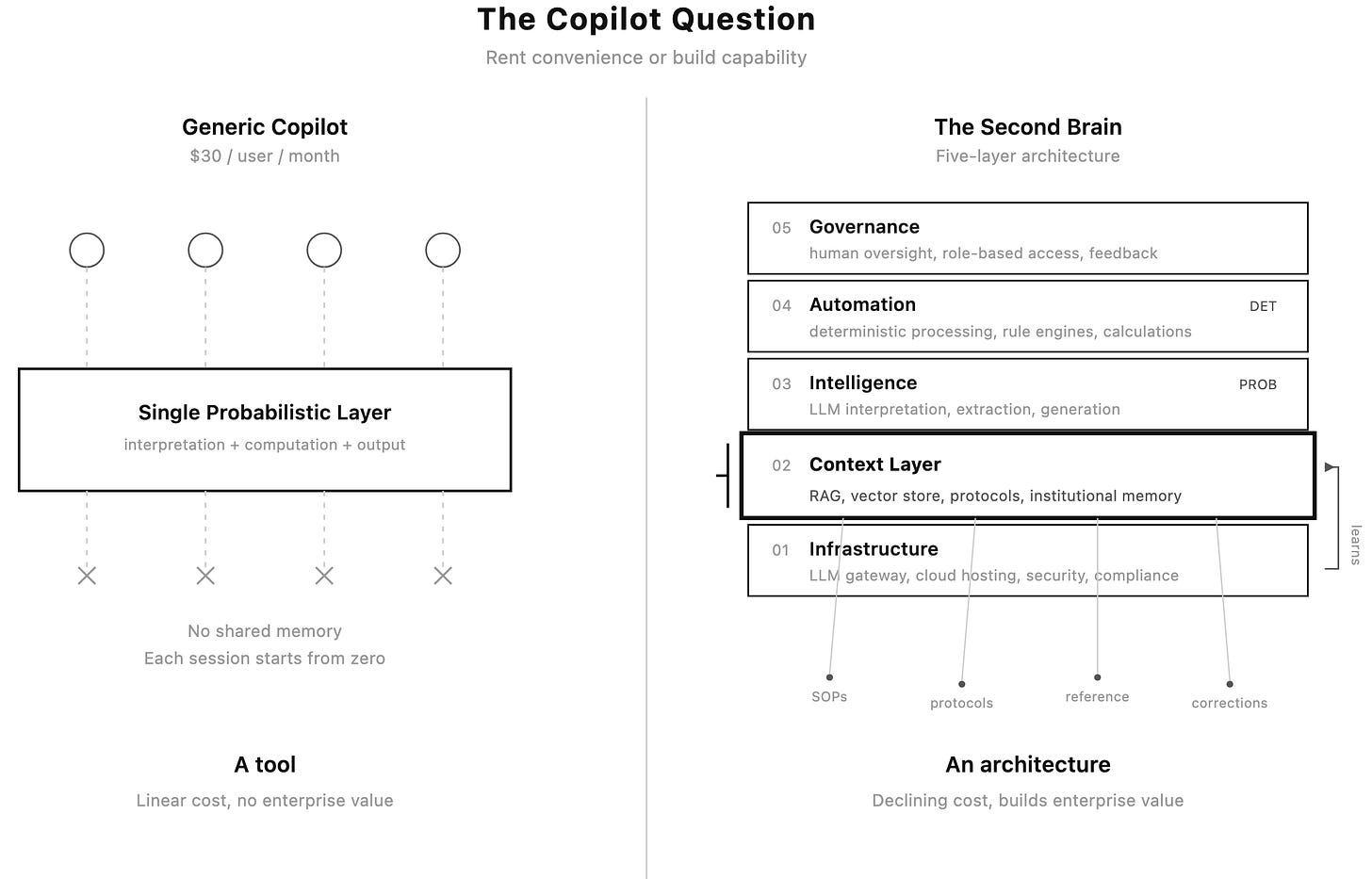

Every enterprise technology decision eventually resolves into a version of the same question: do you buy the standardized product or build something fitted to the business? The current wave of AI copilot deployments — led by Microsoft’s Copilot at $30 per user per month and a growing roster of competitors — has compressed this question into an urgent, high-stakes bet. The answer is less obvious than either camp tends to admit.

An accompanying PDF infographic is available at the end of this article.

What a Generic Copilot Actually Is

A generic AI copilot, with Microsoft 365 Copilot the most prominent example, is a horizontal productivity layer. It embeds a large language model in the applications employees already use: Word, Excel, Outlook, Teams. The value proposition is immediate: no infrastructure to build, no integration to design, no AI expertise required on staff. Plug it in, pay per seat, and let individual employees find their own productivity gains.

This is a real and substantive value proposition. It should not be dismissed. For organisations whose AI needs are genuinely general-purpose — drafting emails, summarising meetings, reformatting documents — a generic copilot delivers meaningful time savings with minimal deployment friction. The product improves with each model generation, and the vendor absorbs the entirety of the infrastructure, compliance, and maintenance burden.

The limitations, however, are structural rather than temporary. A generic copilot knows nothing about the organisation deploying it. It has no access to proprietary workflows, institutional knowledge, domain-specific terminology, or the particular quality standards that define how work should be done in a given business. Each user’s interaction is stateless and disconnected: when one employee uploads a document and receives a summary, that summary vanishes when the session ends. The next employee asking about the same subject starts from zero. There is no shared organisational memory, no compounding of capability, and no accumulation of enterprise-specific intelligence over time.

What a Business-Specific AI Architecture Looks Like

The alternative is not simply “a better chatbot.” It is a fundamentally different deployment philosophy — one that is better understood as building the organisation a second brain rather than handing each employee a general-purpose assistant.

The metaphor is precise. A human brain does not process every input through the same undifferentiated neural pathway. It routes sensory data through specialised regions, draws on long-term memory for context, applies learned heuristics for pattern recognition, and escalates to conscious deliberation when uncertainty is high. A well-designed enterprise AI architecture does the same thing: it separates interpretation from computation, grounds outputs in organisational context, and escalates to human judgment at defined confidence boundaries.

The five-layer enterprise AI architecture described in The Modern AI Construct provides a reference framework for how this works in practice. At the foundation sits the infrastructure layer — the LLM gateway, cloud hosting, and security scaffolding. Above it, the context layer ingests and organises the institution’s own data: its protocols, reference materials, terminology standards, and operational knowledge. The intelligence layer applies the language model for interpretation, extraction, and generation — but only for work suited to probabilistic systems. The automation layer handles deterministic processing: calculations, rule engines, compliance checks, and workflow orchestration. At the top, the governance layer enforces human oversight, role-based access, and continuous feedback loops that allow the system to learn from corrections over time.

This layered separation is what transforms a language model from a tool into an institutional capability — the organisation’s second brain. Rather than inserting a general-purpose language model into generic productivity software, a business-specific architecture places the language model behind a context layer — a structured repository of the organisation’s own data, protocols, terminology, and workflow logic. The context layer is the critical differentiator. It is what gives the system organisational memory.

Consider a healthcare practice where physicians currently use free AI tools to convert dictated clinical notes into formatted documentation. A generic copilot gives each physician a slightly better version of what they already have: a conversation with a language model that knows nothing about the practice’s EMR formatting requirements, approved clinical terminology, or documentation standards. A business-specific deployment ingests those standards into the context layer — the second brain’s long-term memory — so that every output conforms to the organisation’s actual requirements without manual correction. The system does not merely summarise — it summarises correctly, by the organisation’s own definition of correct. The physician is interacting not with a general-purpose model but with an institutional intelligence that understands how this particular organisation works.

This distinction extends into more consequential territory when the work involves computation. A common pattern in businesses adopting AI informally is the use of language models for arithmetic — uploading spreadsheets and asking the model to calculate averages, totals, or billing figures. This is a category error that the five-layer architecture is specifically designed to prevent. Language models are probabilistic systems: they predict the most likely next token, not the mathematically correct answer. They will produce confidently wrong arithmetic at unpredictable intervals. In the five-layer framework, this work is split across the intelligence and automation layers: the language model handles what it is good at (extracting structured fields from unstructured text, resolving ambiguity, classifying categories) and routes the extracted data into the automation layer’s deterministic systems (conventional code, SQL queries, rule engines) for the actual calculations. A generic copilot has no mechanism for this architectural separation. It processes everything through the same probabilistic layer — conflating interpretation and computation in a single pass that is structurally incapable of guaranteeing arithmetic accuracy.
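The split between interpretation and computation can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the `LineItem` type, the `extract_line_items` function, and the note format are all hypothetical. In a real deployment the extraction step would be a language model call; here a regular expression stands in for it so the example runs on its own. The point is that the total is computed by ordinary code using exact decimal arithmetic, never by the model.

```python
import re
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class LineItem:
    description: str
    quantity: int
    unit_price: Decimal


def extract_line_items(note: str) -> list[LineItem]:
    """Intelligence-layer stand-in (hypothetical).

    A real system would ask a language model to pull structured fields
    out of free text. A regex plays that role here so the sketch is
    self-contained.
    """
    pattern = re.compile(
        r"(\d+)\s*x\s*([A-Za-z ]+?)\s*@\s*\$?(\d+(?:\.\d{1,2})?)"
    )
    return [
        LineItem(desc.strip(), int(qty), Decimal(price))
        for qty, desc, price in pattern.findall(note)
    ]


def invoice_total(items: list[LineItem]) -> Decimal:
    """Automation layer: deterministic arithmetic on the extracted fields."""
    return sum((item.quantity * item.unit_price for item in items), Decimal("0"))


if __name__ == "__main__":
    # Hypothetical billing note, invented for illustration.
    note = "2 x consultation @ $120.50, 1 x lab panel @ $89.99"
    items = extract_line_items(note)
    print(items)
    print(invoice_total(items))
```

The design choice worth noting is the typed boundary between the two functions: whatever produces the `LineItem` records can be probabilistic, but everything downstream of them is testable, repeatable code.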

A third dimension is knowledge accumulation — and it is here that the second brain metaphor earns its weight. A business-specific deployment backed by a retrieval-augmented generation (RAG) architecture and a vector store means that reference materials, operational procedures, and institutional knowledge become a shared, searchable, growing asset. When one employee researches a topic and the findings are ingested into the context layer, every subsequent query on that topic benefits. The organisation’s second brain develops a form of institutional memory that no individual employee possesses in full. Over months and years, this creates a compounding capability curve that a collection of disconnected copilot sessions cannot replicate. A generic copilot is stateless by design — each session starts from zero. The second brain retains, connects, and builds.
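The accumulation effect can be illustrated with a toy shared store. This is a sketch under heavy simplifying assumptions: the `ContextStore` class and its methods are hypothetical, and word-count cosine similarity stands in for the embedding model and vector database a production RAG pipeline would use. What it shows is the shape of the idea: knowledge ingested by one employee is retrievable by the next.

```python
import math
import re
from collections import Counter


def _vectorise(text: str) -> Counter:
    """Toy substitute for an embedding: lower-cased word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class ContextStore:
    """Hypothetical shared context layer: every ingest is visible to every user."""

    def __init__(self) -> None:
        self._docs: list[tuple[str, str, Counter]] = []

    def ingest(self, text: str, author: str) -> None:
        self._docs.append((text, author, _vectorise(text)))

    def retrieve(self, query: str, k: int = 1) -> list[tuple[str, str]]:
        """Return the k stored (text, author) pairs most similar to the query."""
        q = _vectorise(query)
        ranked = sorted(self._docs, key=lambda d: _cosine(q, d[2]), reverse=True)
        return [(text, author) for text, author, _ in ranked[:k]]


if __name__ == "__main__":
    store = ContextStore()
    # Invented example content; one employee records a finding...
    store.ingest(
        "Prior authorisation for MRI referrals requires form PA-7 "
        "and a physician signature",
        author="alice",
    )
    store.ingest("Office holiday schedule for the December period", author="bob")
    # ...and a different employee benefits from it later.
    print(store.retrieve("What form is needed for MRI prior authorisation?"))
```

In a real system the retrieved passages would be passed to the language model as grounding context for its answer; the retrieval step itself is what carries the institutional memory.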

The Honest Case for the Generic Copilot

None of this means a generic copilot is the wrong choice. Its advantages are concrete and should be weighed honestly.

Speed of deployment. A generic copilot can be activated across an organisation in days. A business-specific architecture requires discovery, design, integration, and testing — weeks to months before the first user sees value. For organisations under competitive pressure to adopt AI immediately, this time gap is real.

Zero infrastructure burden. The vendor handles hosting, scaling, security patches, model updates, and compliance certifications. The organisation needs no AI engineering talent, no cloud infrastructure expertise, and no ongoing maintenance budget beyond the per-seat fee. For companies without technical depth, this is not a minor consideration — it is often the deciding factor.

Predictable cost structure. Per-seat licensing is easy to budget, easy to approve, and easy to cancel. There is no capital expenditure, no sunk cost in custom development, and no risk of an internal project failing or running over budget.

Continuous improvement without effort. When the underlying model improves — GPT-4 to GPT-4o to the next generation — every user benefits automatically. A business-specific deployment must be re-tested, re-validated, and potentially re-architected to take advantage of model improvements.

Broad applicability. A generic copilot serves every department equally. Marketing, finance, HR, legal, and operations all get the same tool. A business-specific architecture typically targets one or two high-value workflows first and expands incrementally.

The Honest Case for the Business-Specific Architecture

Output quality in domain-specific work. When the work requires adherence to specific formats, terminology, regulatory standards, or institutional protocols, a context-aware system produces materially better outputs. The difference between “generally useful” and “specifically correct” compounds across thousands of interactions.

Architectural separation of probabilistic and deterministic work. Any workflow that involves both interpretation and computation — clinical billing, financial reconciliation, compliance checking, insurance claims processing — benefits from an architecture that uses the right tool for each layer. A generic copilot cannot make this distinction.

Knowledge accumulation as an asset. A RAG-backed system that ingests and retrieves organisational knowledge creates a proprietary asset that appreciates over time — the second brain growing smarter with use. This matters especially for businesses contemplating a future transaction: a buyer conducting due diligence sees materially different value in a proprietary institutional intelligence system versus a collection of SaaS subscriptions. The second brain is an asset on the balance sheet in a way that copilot licences never will be.

Declining marginal cost. The infrastructure cost of a business-specific deployment is largely fixed — the LLM gateway, the RAG pipeline, the hosting environment, and the context layer do not scale linearly with users. At modest user counts, the per-user cost may exceed a copilot subscription. At scale, it drops well below it, because the marginal cost of each additional user approaches the inference cost alone.
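The break-even logic is simple enough to work through directly. In the sketch below, only the $30 per-seat price comes from this article; the fixed monthly cost and the per-user inference cost are hypothetical figures chosen purely to make the arithmetic visible, and real numbers will vary widely by deployment.

```python
import math
from typing import Optional


def custom_cost_per_user(
    fixed_monthly: float, inference_per_user: float, users: int
) -> float:
    """Monthly per-user cost when a fixed platform cost is spread across users."""
    return fixed_monthly / users + inference_per_user


def break_even_users(
    fixed_monthly: float, inference_per_user: float, seat_price: float
) -> Optional[int]:
    """Smallest user count at which the custom build costs no more per user
    than the per-seat subscription. Returns None if inference alone costs
    at least as much as a seat, in which case there is no break-even."""
    if seat_price <= inference_per_user:
        return None
    return math.ceil(fixed_monthly / (seat_price - inference_per_user))


if __name__ == "__main__":
    SEAT_PRICE = 30.0        # per user per month, as cited in this article
    FIXED_MONTHLY = 6000.0   # hypothetical: gateway, RAG pipeline, hosting
    INFERENCE = 5.0          # hypothetical: inference cost per user per month

    for n in (100, 240, 1000):
        print(n, custom_cost_per_user(FIXED_MONTHLY, INFERENCE, n))
    print(break_even_users(FIXED_MONTHLY, INFERENCE, SEAT_PRICE))
```

Under these assumed figures the custom deployment is more expensive per user below the break-even point and cheaper above it, with the per-user cost approaching the inference cost as the user count grows.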

Augmentation calibrated to role. Not every employee should interact with AI in the same way. A physician generating clinical documentation has fundamentally different quality requirements, review workflows, and error tolerances than an HR administrator drafting a policy memo. The governance layer of a five-layer architecture enforces role-appropriate workflows — human review at specific confidence boundaries, domain-specific guardrails, output validation against organisational standards. A generic copilot treats every user identically.
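Role-calibrated review can be expressed as a small routing rule. The sketch below is illustrative only: the role names, the threshold values, and the idea that each draft arrives with a numeric confidence score are all assumptions. Language models do not supply a calibrated confidence by default, so a real governance layer would need its own scoring or validation step to produce one.

```python
from dataclasses import dataclass

# Hypothetical per-role thresholds: stricter where errors are costlier.
REVIEW_THRESHOLDS = {
    "physician": 0.95,
    "hr_admin": 0.70,
}

# Any value above 1.0 means "never auto-release".
ALWAYS_REVIEW = 1.01


@dataclass(frozen=True)
class Draft:
    role: str
    text: str
    confidence: float  # assumed to lie in [0, 1], produced upstream


def route(draft: Draft) -> str:
    """Return 'auto_release' or 'human_review' for a draft.

    Roles without a configured threshold are always sent to a human.
    """
    threshold = REVIEW_THRESHOLDS.get(draft.role, ALWAYS_REVIEW)
    return "auto_release" if draft.confidence >= threshold else "human_review"


if __name__ == "__main__":
    print(route(Draft("physician", "clinical note draft", 0.90)))
    print(route(Draft("hr_admin", "policy memo draft", 0.90)))
    print(route(Draft("contractor", "unclassified draft", 0.99)))
```

The same score leads to different outcomes for different roles, which is the behaviour the governance layer is meant to enforce; defaulting unknown roles to human review keeps the rule conservative.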

The Decision Framework

The choice is not binary — many organisations will deploy both. A generic copilot handles the broad, horizontal productivity layer (email, scheduling, document drafting) while a business-specific architecture addresses the high-value, domain-specific workflows where output quality, compliance, and knowledge accumulation matter most.

The relevant questions are not about technology preferences. They are about business structure.

First, how domain-specific is the work? Organisations whose primary value creation involves specialised knowledge, regulated workflows, or proprietary processes will extract disproportionately more value from a fitted architecture. Organisations whose work is primarily general-purpose communication and coordination are better served by a generic copilot.

Second, what are the error costs? In workflows where a wrong output is merely inefficient — a poorly drafted email, a mediocre slide deck — generic copilots are adequate. In workflows where a wrong output has financial, legal, clinical, or regulatory consequences, the architectural separation between probabilistic interpretation and deterministic processing is not a luxury but a requirement.

Third, does the organisation intend to build AI into its enterprise value, or merely use it as a productivity tool? If AI is an operating expense — a line item that improves employee efficiency — a copilot subscription is the natural vehicle. If AI is a strategic asset — a system that accumulates institutional knowledge, reduces marginal costs over time, and increases the organisation’s value to a future acquirer or investor — then the deployment must be architected, not subscribed to.

Fourth, what is the realistic internal capacity for an architectural deployment? A business-specific AI system requires design, integration, and ongoing refinement. Organisations without access to competent implementation partners or internal technical talent will find that a poorly executed custom architecture delivers less value than a well-deployed generic copilot. Execution quality is not a secondary consideration — it is the primary one.

The copilot-versus-architecture question is, at its core, the same question enterprises have faced with every generation of enterprise technology: whether to rent convenience or build capability. Neither answer is universally correct. The mistake is treating the decision as a technology evaluation rather than a business strategy question. A generic copilot is a tool: useful, accessible, and disposable. A layered architecture built on the five-layer framework described in The Modern AI Construct is an institution’s second brain: a system that learns, retains, and compounds. The technology powering both will change. The strategic logic of what the organisation is building, and for whom, will not.