The Headcount Trap: What AI Coding Tools Actually Change About Software Team Economics

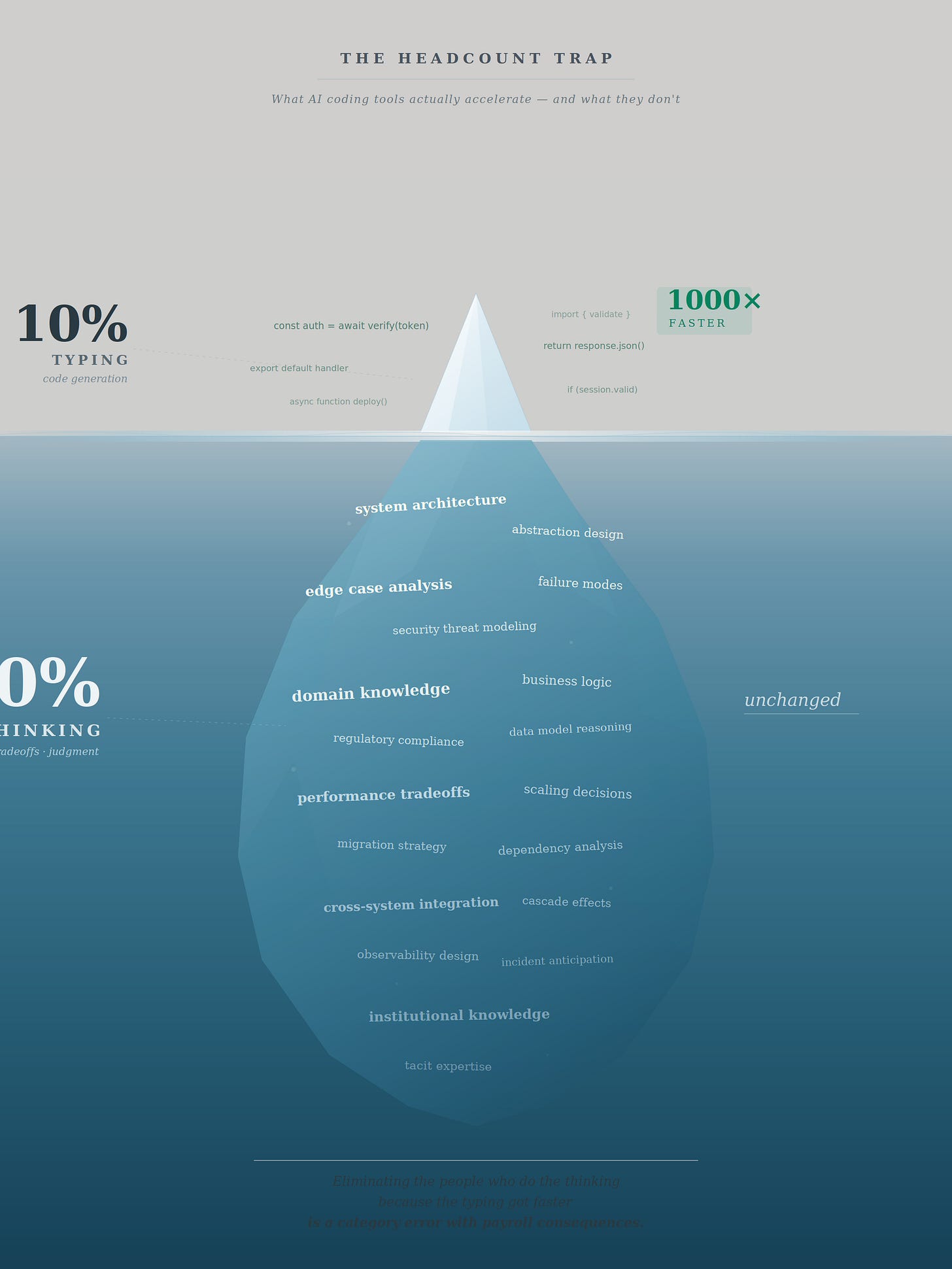

AI made code generation 1000× faster. The work that actually matters hasn’t changed much at all.

The most dangerous idea in enterprise software right now is not that AI coding tools don’t work. It is that they work well enough to make staffing decisions on instinct.

Every week, another breathless post reports that Claude Code or Cursor or Copilot enabled a single developer to “build an entire application in a weekend.” The implied conclusion is always the same: if one person with AI can do what five did before, four people are redundant. The arithmetic is seductive. It is also incomplete — in ways that will cost firms years of compounding advantage if they act on it without understanding what the tools actually change about the economics of building software.

This is not an argument against headcount adjustment. Some firms are overstaffed. Some roles will become redundant. Pretending otherwise would be as irresponsible as pretending AI tools eliminate the need for developers entirely. The argument is about sequencing, evidence, and the difference between a strategic decision and a panicked one.

What the tools actually deliver

AI coding assistants genuinely accelerate certain categories of software development work. They generate boilerplate, scaffold features, write tests, navigate unfamiliar codebases, and handle repetitive implementation tasks at speeds no human can match. These are real capabilities producing real productivity gains, and firms that ignore them will fall behind.

But the gains are unevenly distributed across task types, and the gap between raw AI output and production-grade software remains significant. Functional code that runs and handles the happy path is not the same as production software with proper error handling, security hardening, edge case coverage, observability, and maintainability. The distance between those two things is where most engineering labor lives, and it is the kind of labor AI tools handle least reliably.

Early observations from Anthropic’s internal usage suggested that unguided sessions succeeded roughly a third of the time, with ten to twenty percent abandoned entirely. Those figures are likely outdated — the tools have improved substantially through multiple release cycles — but the structural point they illustrate has not changed. No current AI coding tool has eliminated the need for human supervision. The failure rate may have declined. It has not reached zero, and the cost of undetected failures in production systems scales non-linearly. A bug that a human reviewer would catch in five minutes can cost weeks of incident response, customer trust erosion, and reputational damage if it reaches production unreviewed.

The firms extracting the most value from these tools have converged on a common set of practices: well-maintained documentation files (CLAUDE.md in the Claude Code ecosystem) that encode architectural decisions, coding conventions, and domain vocabulary; plan-before-execute workflows that separate problem exploration from code generation; committed test suites that prevent the AI from silently rewriting verification criteria; and fresh-context review sessions where code is evaluated by an AI instance that did not write it. Every one of these practices requires experienced developers to design, maintain, and enforce. The AI accelerates execution. Humans still own the architecture of correctness.
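One of these practices, keeping the committed test suite out of the AI's reach, can be approximated with a small pre-merge check. The sketch below is illustrative only: the function name, the `tests` directory, and the example paths are hypothetical, and a real team would wire something like this into its own CI and review tooling.

```python
from pathlib import PurePosixPath

# Hypothetical: top-level directories that hold committed verification criteria.
PROTECTED_DIRS = ("tests",)


def protected_changes(changed_paths, protected_dirs=PROTECTED_DIRS):
    """Return the changed paths that live under a protected top-level directory.

    A non-empty result means an AI-generated change touched the test suite
    and should be routed to a human reviewer rather than merged automatically.
    """
    flagged = []
    for raw in changed_paths:
        parts = PurePosixPath(raw).parts
        if parts and parts[0] in protected_dirs:
            flagged.append(raw)
    return flagged


# Example: one implementation file and one test file changed in the same session.
flagged = protected_changes(["src/auth/session.py", "tests/test_session.py"])
print(flagged)
```

The check does not judge whether the test change is legitimate. It only makes the change visible, which leaves the decision with the developer who owns the verification criteria.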

The 90/10 problem

There is an older piece of wisdom in software engineering that predates AI tools by decades: programming is ninety percent thinking and ten percent typing. The ratio has always been approximate, but the underlying observation is precise. The hard part of building software is not producing the text that a compiler or interpreter consumes. It is deciding what that text should say — understanding the problem domain, identifying edge cases, choosing the right abstraction, reasoning about how a change in one module will cascade through a system, weighing tradeoffs between performance and maintainability, and anticipating failure modes that will only surface under production load at scale.

AI coding tools have made the ten percent a thousand times faster. They have not materially changed the ninety percent.

This is the single most important structural fact about the current generation of AI coding assistants, and the one that the viral productivity narrative most consistently obscures. When someone reports that Claude Code “wrote an entire authentication module in three minutes,” what actually happened is that a human spent time thinking about what the authentication module needed to do — which identity providers to support, how tokens should be stored and rotated, what the session lifecycle looks like, how failures should surface to the user — and then the AI generated the implementation in three minutes instead of the three hours it would have taken to type manually. The thinking time did not compress. The typing time did.

This distinction has direct implications for headcount decisions. If you believe that programming is mostly typing, then a tool that types a thousand times faster makes most programmers redundant. If you understand that programming is mostly thinking, then the same tool changes what developers spend their time on — less time typing, more time thinking, reviewing, and verifying — without necessarily reducing the number of people needed to do the thinking.

The confusion arises because the thinking work is invisible in the output. A commit log shows code that was written. It does not show the two hours of reasoning about why that code takes the shape it does, the three alternative approaches that were considered and rejected, or the edge cases that were identified and handled before they became production incidents. AI tools generate visible output at extraordinary speed, which creates the impression that the entire process has been accelerated by the same factor. It has not. The bottleneck has moved from typing to thinking, and thinking does not parallelise or automate the way typing does.
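The gap between the speed of the visible output and the speed of the whole process can be put in numbers with Amdahl's law, which is not invoked by name above but describes the same situation: when only a fraction of the work is accelerated, the overall speedup is capped by the part that is not. The sketch below takes the approximate 90/10 split and the thousandfold figure at face value; both are rhetorical estimates, not measurements.

```python
def overall_speedup(accelerated_fraction: float, factor: float) -> float:
    """Amdahl's law: end-to-end speedup when only part of the work gets faster.

    accelerated_fraction: share of total time spent on the accelerated part (0..1).
    factor: how many times faster that part becomes.
    """
    if not 0.0 <= accelerated_fraction <= 1.0:
        raise ValueError("accelerated_fraction must be between 0 and 1")
    if factor <= 0:
        raise ValueError("factor must be positive")
    remaining_time = (1.0 - accelerated_fraction) + accelerated_fraction / factor
    return 1.0 / remaining_time


# Assumed inputs: typing is 10% of the job and becomes 1000x faster.
speedup = overall_speedup(accelerated_fraction=0.10, factor=1000.0)
print(f"End-to-end speedup: {speedup:.3f}x")  # roughly 1.111x

# Even an infinitely fast typist cannot beat 1 / 0.9.
ceiling = 1.0 / (1.0 - 0.10)
print(f"Upper bound with instant typing: {ceiling:.3f}x")
```

Under these assumptions the whole job gets about eleven percent faster, not a thousand times faster. The real-world figure depends on how much of a given role is actually typing, which is exactly what a firm has to measure for itself.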

This does not mean the thinking will never be delegated. Future models may close the gap. But decisions made today on the assumption that the gap is already closed will produce teams that lack the cognitive capacity to do the work that the tools cannot yet do — and that is where the real engineering value lives.

The real strategic choice

Firms adopting AI coding tools face a genuine decision, but it is not “use AI” versus “don’t.” It is between two deployment philosophies — and the responsible answer, for most firms, is a carefully sequenced combination of both.

The first philosophy treats AI as a cost reduction lever. Developers are expensive. If AI makes each developer more productive, fewer are needed for the same output. Reduce headcount, capture the margin, report better numbers. The logic of operational efficiency.

The second treats AI as a throughput multiplier. The same developers, equipped with AI tools, ship more features, serve more clients, explore more product directions, and iterate faster. Hold headcount constant, capture the speed advantage, and compound it into market position. The logic of strategic leverage.

Presenting these as mutually exclusive — as much of the current discourse does — is a false binary. The question is not which strategy to pursue. It is which to pursue first, and how to sequence the transition so that the decisions made early do not foreclose the options available later.

Why speed first is usually right

The case for prioritising throughput over headcount reduction rests on three dynamics that hold across most — though not all — firm contexts.

The first is compounding. A team that ships features in two weeks instead of six does not merely save four weeks of payroll per cycle. It captures market feedback three times as often, iterates toward product-market fit sooner, and reaches revenue milestones earlier. Each cycle feeds the next. This is among the best-established dynamics in technology strategy: the foundation of lean methodology, the OODA loop, and decades of competitive research. The dynamic can fail. Companies can ship fast and learn nothing, generating features nobody wants while accumulating technical debt. Speed is necessary but not sufficient. It requires a functioning feedback loop between velocity and product insight. But cutting capacity makes that speed impossible, which forecloses the option entirely.
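The compounding argument can be sketched with simple arithmetic. The cycle lengths come from the paragraph above; the per-cycle improvement rate is a purely hypothetical placeholder, included only to show how the number of feedback cycles interacts with what each cycle teaches.

```python
WEEKS_PER_YEAR = 52


def cycles_per_year(cycle_weeks: float) -> float:
    """Number of ship-and-learn cycles that fit in a year."""
    if cycle_weeks <= 0:
        raise ValueError("cycle_weeks must be positive")
    return WEEKS_PER_YEAR / cycle_weeks


def compounded_gain(cycles: float, gain_per_cycle: float) -> float:
    """Cumulative multiplier if every cycle improves the product by gain_per_cycle."""
    return (1.0 + gain_per_cycle) ** cycles


slow_cycles = cycles_per_year(6)  # six-week release cycle
fast_cycles = cycles_per_year(2)  # two-week release cycle

# Hypothetical assumption: each cycle yields a 2% improvement in some product metric.
ASSUMED_GAIN_PER_CYCLE = 0.02

print(f"Cycles per year: {slow_cycles:.1f} vs {fast_cycles:.1f}")
print(f"Compounded multiplier, slow team: {compounded_gain(slow_cycles, ASSUMED_GAIN_PER_CYCLE):.2f}")
print(f"Compounded multiplier, fast team: {compounded_gain(fast_cycles, ASSUMED_GAIN_PER_CYCLE):.2f}")

# The failure case from the text: shipping fast while learning nothing compounds to nothing.
print(f"Fast team, zero learning: {compounded_gain(fast_cycles, 0.0):.2f}")
```

The last line is the caveat in code form: tripling the number of cycles only helps when the gain per cycle is above zero.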

The second is revenue linkage. For any company where developer capacity is functionally equivalent to revenue capacity, which describes most technology services firms, agencies, and consultancies, removing developers removes the ability to generate revenue. A consulting firm that cuts its engineering team from twenty to twelve has not become more efficient. It has become smaller. The margin percentage may improve, but the margin dollars shrink, and the firm’s capacity to pursue new engagements shrinks in proportion. This is doubly true for firms building platforms or productised offerings, where sustained development throughput is needed to construct the asset that will eventually reduce the developer effort needed per additional dollar of revenue.
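A worked example shows how margin percentage and margin dollars can move in opposite directions. Only the team sizes (twenty and twelve) come from the text. The utilisation rates, revenue per billable developer, and cost per developer are hypothetical figures chosen for illustration, and the `Team` class is an invented helper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Team:
    developers: int
    utilisation: float               # share of developer time that is billable
    revenue_per_billable_dev: float  # annual revenue from one fully billable developer
    cost_per_dev: float              # annual fully loaded cost of one developer

    @property
    def revenue(self) -> float:
        return self.developers * self.utilisation * self.revenue_per_billable_dev

    @property
    def cost(self) -> float:
        return self.developers * self.cost_per_dev

    @property
    def margin_dollars(self) -> float:
        return self.revenue - self.cost

    @property
    def margin_pct(self) -> float:
        return self.margin_dollars / self.revenue


# Hypothetical inputs: the cut removes bench time, so utilisation rises slightly.
before = Team(developers=20, utilisation=0.80,
              revenue_per_billable_dev=250_000, cost_per_dev=150_000)
after = Team(developers=12, utilisation=0.85,
             revenue_per_billable_dev=250_000, cost_per_dev=150_000)

for label, team in (("before", before), ("after", after)):
    print(f"{label}: revenue={team.revenue:,.0f} "
          f"margin={team.margin_dollars:,.0f} ({team.margin_pct:.1%})")
```

With these assumed inputs the margin percentage rises while both revenue and margin dollars fall. Different inputs can reverse the result, which is why the calculation has to be run on a firm's own numbers.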

The third is valuation signalling. Private equity buyers and strategic acquirers price technology-enabled services businesses along a spectrum. Firms that respond to AI tools by cutting developers signal optimisation within the services model, which is valued at services multiples. Firms that respond by shipping faster and building reusable platform capabilities signal a transition toward the software model, which is valued at meaningfully higher multiples. A legitimate objection to this framing is that buyers ultimately value metrics, not signals: revenue growth, gross margin trajectory, customer retention, recurring revenue percentage. True. But the strategic choices a firm makes determine which metrics improve, and the throughput-first approach tends to improve the metrics that drive higher valuations.

When headcount reduction is appropriate — and when it is premature

The strongest objection to a blanket “speed first” prescription is that it ignores firms for which the advice is unaffordable. A company under acute margin pressure, with stagnant revenue and limited financial runway, cannot fund a multi-quarter investment phase before rationalising. Telling that firm to maintain headcount and invest in documentation infrastructure is not strategic counsel. It is a prescription for running out of cash.

This objection is valid, and any honest framework must accommodate it. The answer is not that such firms should avoid headcount adjustment. It is that they should make those adjustments with precision rather than panic.

Three conditions distinguish strategic headcount reduction from reactive cuts.

The first condition is diagnostic clarity. Before removing any role, the firm must understand which tasks AI tools can reliably absorb and which they cannot. This requires actual measurement — not assumptions based on vendor marketing or weekend prototype demonstrations, but instrumented data from the firm’s own codebase, with its own complexity, conventions, and quality standards. A role that consists primarily of writing boilerplate CRUD endpoints is a strong candidate for AI substitution. A role that consists primarily of architectural decision-making, cross-team coordination, and production incident response is not. Most roles contain a mix of both, and the ratio varies by project, client, and codebase. Cutting without diagnostic clarity means guessing which roles are redundant, and guessing wrong is expensive to reverse.

The second condition is infrastructure readiness. Making AI tools work effectively at team scale requires investment that cannot be skipped: documentation that gives the AI operational context, workflow patterns that separate planning from execution, CI/CD pipelines that verify AI-generated output against the same quality standards as human-written code, and Git discipline that isolates AI changes for review. A large proportion of mid-market development teams — the exact population most likely to make impulsive headcount decisions — operate with incomplete documentation, inconsistent test coverage, and informal code review. These are practices any well-run team should already have, and the fact that many teams lack them does not make the investment trivial. It makes it necessary, and it must precede the cuts it is intended to support. Reducing headcount before building this infrastructure means the remaining developers never achieve the productivity levels that justified the reduction.

The third condition is honest denominator analysis. When critics of this argument ask “How many architects, reviewers, and gatekeepers does a team actually need once AI handles execution?”, they are asking the right question. The honest answer is that nobody knows yet with precision, because the tools are too new, the workflows are still being designed, and the failure modes of AI-supervised development at scale are still being discovered. But “we don’t know yet” is not the same as “the number hasn’t changed.” It almost certainly has. A team of ten developers who previously wrote code and reviewed each other’s work probably does not need ten reviewers once AI handles a significant share of the code generation. It might need six. It might need four. The correct number will become clear empirically, over time, as firms instrument their AI-augmented workflows and measure quality outcomes, defect rates, and incident frequency. The responsible approach is to let the data reveal the answer rather than guess it in advance and discover the guess was wrong after institutional knowledge has walked out the door.

The overstaffing question

One scenario the speed-first framework handles poorly is the firm that is genuinely overstaffed before AI enters the picture. Many mid-market services firms carry bench time, maintain teams sized for peak historical demand rather than current workload, and employ developers on internal projects with questionable return. For these firms, AI tools do not create redundancy. They reveal it.

This is a legitimate and common situation, but it requires careful separation from the AI adoption question. If a firm has fifteen developers and only needs eleven based on current and projected workload — independent of any AI capability — then the headcount adjustment is a management decision that should have been made earlier. Conflating it with AI adoption muddies the analysis and tempts leadership into attributing structural overcapacity to technological disruption, which produces the wrong lessons for future planning.

The diagnostic question is straightforward: would this role be redundant even if AI coding tools did not exist? If yes, the adjustment is an overdue management correction. If no — if the role is only redundant because AI can now perform tasks the developer previously handled — then the three conditions above apply. The distinction matters because the two types of adjustment carry different risks, different timelines, and different implications for the remaining team.

The role evolution nobody has staffed for

Whether a firm prioritises speed, cuts, or both, one change is unavoidable: the developer’s job is different now. The shift is from writing code to specifying intent, reviewing plans, and verifying output. In terms of the 90/10 split, the job has shed most of its typing component and become almost entirely a thinking job. Senior engineers become more valuable as architecture owners and review gatekeepers: the people who can determine whether an AI-generated plan is correct before execution begins. Junior engineers need stronger code-reading and evaluation skills rather than raw implementation speed. The entire team needs what might be called AI supervision fluency: the ability to recognise when the tool is on the right track and when it is confidently heading toward an expensive dead end.

This is not a cosmetic relabelling. It is a genuine skill shift with hiring, training, and compensation implications. Firms that cut developers without understanding which competencies they are losing — and which they need to acquire — risk optimising for a workforce profile that no longer matches the work. The developer who was mediocre at writing code but exceptional at architectural reasoning and code review may be more valuable in an AI-augmented team than the developer who was fast at implementation but poor at evaluation. Most performance management systems are not designed to identify or reward this distinction, which means firms making headcount decisions based on historical performance data may be cutting exactly the wrong people.

The sequencing that preserves optionality

For firms with the financial runway to choose their approach, the following sequence minimises irreversible error.

Phase one is infrastructure and measurement. Build the documentation and workflow foundations. Equip the existing team with AI tools. Measure throughput changes over two to three quarters — not lines of code, but features shipped, defect rates, client deliverables completed, and incident frequency. This phase costs time and attention but preserves all future options.

Phase two is acceleration. With the infrastructure in place and productivity data in hand, use the gains to take on more work: more client engagements, more product features, more experimental initiatives. This is the phase where speed compounds into market position, and where the firm builds the evidence base for which roles are genuinely capacity-constrained and which have slack.

Phase three is rationalisation, informed by data. The productivity measurements from phases one and two reveal which roles AI tools have made redundant, which have changed, and which remain essential. Headcount adjustments made at this stage are surgical rather than speculative — grounded in the firm’s own experience rather than vendor claims or competitor behaviour.

For firms without that runway — those under immediate financial pressure — the sequence compresses but the logic holds. Conduct the diagnostic work in weeks rather than quarters. Identify roles where AI substitution is most clearly supported by the firm’s specific context. Make targeted reductions while simultaneously building the infrastructure the remaining team needs. Accept that the compressed timeline increases the risk of cutting the wrong roles, and preserve rehiring optionality where possible.

What is actually irresponsible

The viral narrative that AI coding tools can replace developers is not wrong because the tools are weak. They are not weak. It is irresponsible because it treats a complex, context-dependent, high-stakes organisational decision as though it were a simple arithmetic problem: if one developer plus AI equals three developers, then two developers are redundant. This reasoning ignores the infrastructure required to make the equation hold, the difference between prototype output and production quality, the compounding value of speed versus the one-time value of cost cuts, the diagnostic work needed to identify which roles are actually substitutable, the irreversibility of knowledge loss when experienced developers leave, and, most fundamentally, the fact that a tool which makes the ten percent of programming that involves typing a thousand times faster has not touched the ninety percent that involves thinking. Eliminating the people who do the thinking because the typing got faster is not an efficiency gain. It is a category error with payroll consequences.

Equally irresponsible is the opposite claim — that AI changes nothing about team structure and every current role will persist indefinitely. It will not. Roles will change. Some will be eliminated. The number of people needed to produce a given quantum of software output is declining, and pretending otherwise serves no one.

The responsible position sits between these extremes and insists on three things: that decisions be made on evidence rather than anecdote, that sequencing be deliberate rather than reactive, and that the humans whose livelihoods are affected be treated as participants in a transition rather than line items in a cost reduction exercise.

The question was never “Can AI do it faster?” It was always “Faster toward what, and at what cost to whom?” The firms that take that question seriously will navigate this transition successfully. The ones that reduce it to a headcount spreadsheet will not.