The Token Economy: What a $100,000 Employee Really Costs in the Age of AI

2026 Week 5: The economics of replacing knowledge workers with AI agents are compelling — but only if you account for the costs that most proponents conveniently omit.

Every knowledge worker in every office in the world is, at a fundamental level, a token-processing machine. They consume information — emails, documents, spreadsheets, meeting transcripts — and they produce it: reports, analyses, recommendations, decisions rendered in language. The atomic unit of this cognitive labour has always been invisible, buried inside salary bands and benefits packages and overhead allocations that obscure the true unit economics of thinking for a living.

Artificial intelligence has made that unit visible. The token — roughly three-quarters of a word, or four characters — is now the metered output of both human cognition and machine inference. For the first time in economic history, we can place human and artificial intelligence on the same balance sheet, denominated in the same currency, and ask a straightforward question: what does a token of cognitive work actually cost?

The answer is more nuanced, more interesting, and more strategically consequential than the breathless commentary from either AI evangelists or AI sceptics would suggest.

For this analysis I deliberately set aside what might be called the human ingenuity factor: the capacity for original insight, creative leaps, political navigation, ethical judgment under ambiguity, and the kind of lateral thinking that produces breakthroughs rather than competent output. In my view these are real and, for now, largely irreplaceable capabilities. Excluding them is not an assertion that they do not matter; it is a modelling choice that isolates the economic comparison on the substantial portion of knowledge work that is routine, procedural, and pattern-based, which is the portion where AI agents are already functionally capable. For most knowledge workers, that portion is larger than they would like to admit. The ingenuity factor deserves its own treatment, but including it here would obscure the token economics that are the subject of this analysis, and those economics are consequential enough on their own terms to warrant a clear-eyed examination.

Decomposing the Human Token Machine

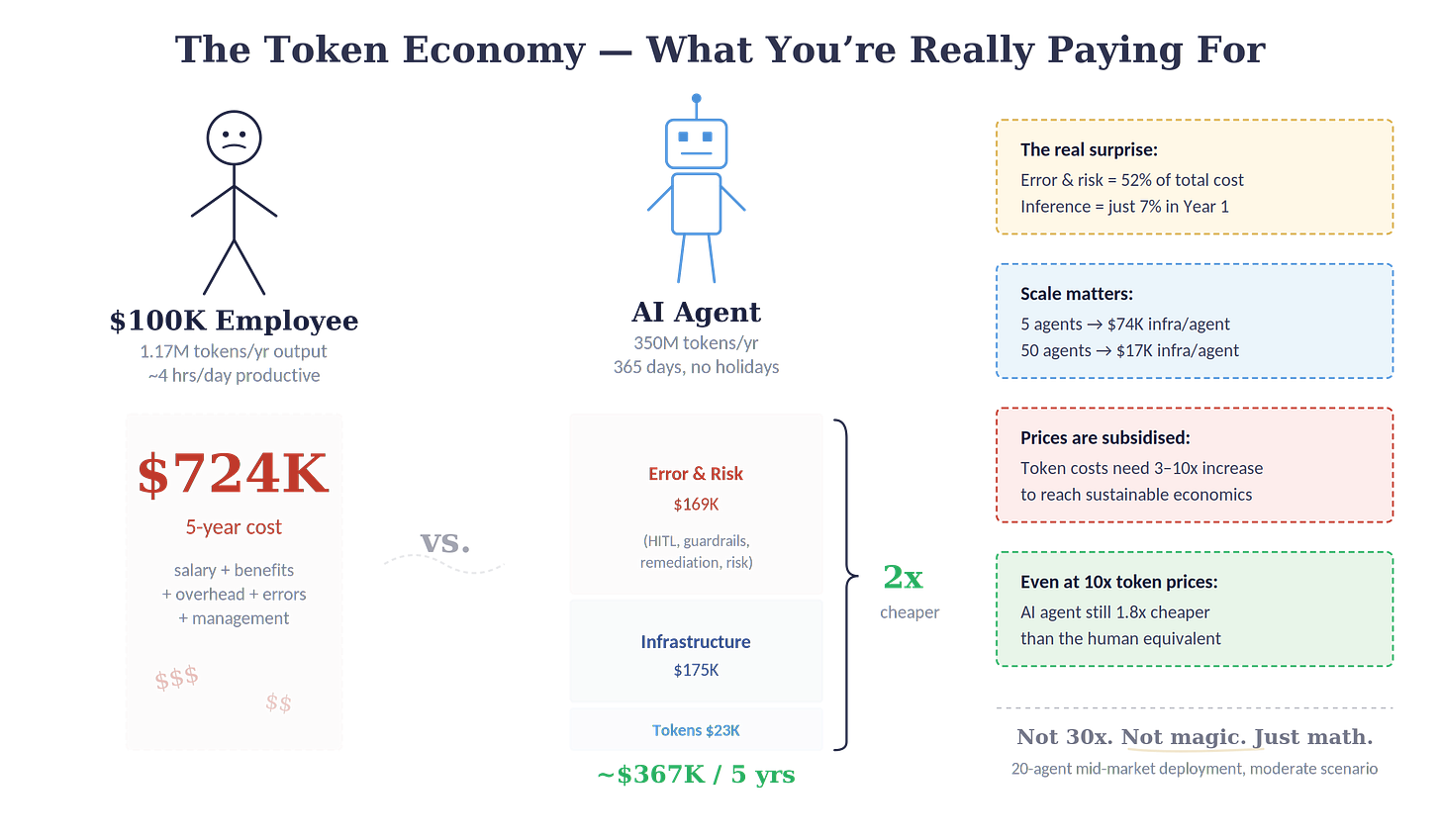

Consider a knowledge worker earning $100,000 per year. Add benefits, payroll taxes, office space, equipment, management overhead, and HR administration (the standard loading factor runs 35–50% above base), and at the low end of that range the fully burdened annual cost lands at roughly $135,000.

What does this person produce? Research on workplace productivity suggests the average knowledge worker generates approximately 3,500 words per day across all channels: emails, documents, messages, presentations. Over 250 working days, that yields about 875,000 words, or 1.17 million tokens of written output annually. But output is only half the throughput equation. The same worker consumes vastly more information than they produce — reading, reviewing, analysing, discussing. A reasonable estimate of total cognitive throughput, input and output combined, runs 7–10 million tokens per year.
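The word-to-token conversion can be checked in a few lines. This is a minimal sketch assuming the rule of thumb stated earlier (one token is roughly three-quarters of a word); real tokenisers vary by model and by text, so the constant is an approximation, not a property of any particular tokeniser.

```python
# Rule of thumb from the article: one token is roughly three-quarters of a word.
WORDS_PER_TOKEN = 0.75

def words_to_tokens(words: float) -> float:
    """Convert a word count into an approximate token count."""
    return words / WORDS_PER_TOKEN

words_per_day = 3_500
working_days = 250

annual_words = words_per_day * working_days          # written output only
annual_output_tokens = words_to_tokens(annual_words)

print(f"{annual_words:,} words ~ {annual_output_tokens / 1e6:.2f} million tokens")
```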

Those 7–10 million tokens cost the employer $135,000. That implies a cost of roughly $13.50–$19.30 per thousand tokens (equivalently, $13,500–$19,300 per million tokens) for human cognitive labour.

But this calculation flatters the human worker considerably. Workforce research consistently shows that knowledge workers spend only 2–4 hours per day on genuinely productive deep work, and a Zapier survey found employees average 5.8 hours of meaningful work against 3.8 hours of busywork in a 9.6-hour day. Adjust for productive output only and the effective cost rises to roughly $25–$40 per thousand useful tokens.
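Making the units explicit guards against a slip of three orders of magnitude: $135,000 spread over 7–10 million tokens works out to roughly 1.4 to 1.9 cents per token. The sketch below uses the article's figures; the 50% productive share is an illustrative assumption (the paragraph cites both 2–4 hours of deep work and a 5.8-of-9.6-hour split without settling on a single ratio), chosen because it lands inside the quoted $25–$40 range.

```python
FULLY_BURDENED_COST = 135_000      # USD per year
THROUGHPUT = (7e6, 10e6)           # tokens per year, input and output combined

def usd_per_thousand(annual_cost: float, annual_tokens: float) -> float:
    """Cost of 1,000 tokens of cognitive throughput, in USD."""
    return annual_cost / annual_tokens * 1_000

# Fewer tokens for the same salary means a higher price per token.
high, low = (usd_per_thousand(FULLY_BURDENED_COST, t) for t in THROUGHPUT)
print(f"${low:.2f}-${high:.2f} per 1,000 tokens "
      f"(${low * 1_000:,.0f}-${high * 1_000:,.0f} per million)")

# Illustrative assumption: about half of that throughput is productive work.
PRODUCTIVE_SHARE = 0.5
print(f"Productive-only: ${low / PRODUCTIVE_SHARE:.2f}-"
      f"${high / PRODUCTIVE_SHARE:.2f} per 1,000 tokens")
```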

Hold that number. We will need it.

The AI Agent’s Appetite

Replacing that same knowledge worker with an AI agent requires a fundamentally different token profile. An agent does not type at 40 words per minute and then stare at Slack for twenty minutes. It processes at machine speed, but it also consumes tokens in ways a human does not: system prompts loaded with every request, context windows stuffed with conversation history, agentic reasoning loops where the model calls tools, reviews results, and iterates before producing a final output.

A realistic estimate: a single substantive task — responding to an email thread, drafting a report section, triaging a support ticket — consumes 10,000–20,000 tokens when you account for the full agentic loop. At 40–80 tasks per day, running 365 days per year (AI agents do not take holidays), an agent consumes roughly 350 million tokens annually. Using a 60/40 input-to-output split: 210 million input tokens and 140 million output tokens.
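The agent's annual token budget follows from three inputs: tokens per task, tasks per day, and days per year. A small sketch using the article's ranges shows how wide the envelope is, and that the working figure of 350 million sits slightly above its midpoint; the 60/40 split is then applied to that working figure.

```python
DAYS_PER_YEAR = 365                  # agents run every day of the year
tokens_per_task = (10_000, 20_000)   # full agentic loop per substantive task
tasks_per_day = (40, 80)

def annual_tokens(per_task: float, per_day: float, days: int = DAYS_PER_YEAR) -> float:
    """Total tokens consumed in a year for a given workload."""
    return per_task * per_day * days

low = annual_tokens(tokens_per_task[0], tasks_per_day[0])
high = annual_tokens(tokens_per_task[1], tasks_per_day[1])
mid = annual_tokens(sum(tokens_per_task) / 2, sum(tasks_per_day) / 2)
print(f"Envelope: {low / 1e6:.0f}M to {high / 1e6:.0f}M tokens; midpoint {mid / 1e6:.1f}M")

# The article's working figure, split 60/40 between input and output.
WORKING_TOTAL = 350e6
input_tokens, output_tokens = 0.6 * WORKING_TOTAL, 0.4 * WORKING_TOTAL
print(f"Input {input_tokens / 1e6:.0f}M, output {output_tokens / 1e6:.0f}M")
```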

At March 2026 API pricing for Claude Sonnet 4.6 — $3.00 per million input tokens, $15.00 per million output — that is $2,730 per year.
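The inference bill is a straight multiplication of token volumes by list prices. The sketch below takes the per-million prices quoted above as inputs; provider pricing changes, so the `TokenPricing` values here are assumptions for illustration rather than a live price list.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TokenPricing:
    input_per_million: float    # USD per 1M input tokens
    output_per_million: float   # USD per 1M output tokens

    def annual_cost(self, input_tokens: float, output_tokens: float) -> float:
        """Annual bill for the given input and output token volumes."""
        return (input_tokens / 1e6 * self.input_per_million
                + output_tokens / 1e6 * self.output_per_million)

# Prices as quoted in the article; an assumption, not a live price list.
quoted = TokenPricing(input_per_million=3.00, output_per_million=15.00)
bill = quoted.annual_cost(input_tokens=210e6, output_tokens=140e6)
print(f"Annual inference bill: ${bill:,.0f}")
print(f"Fully burdened human cost / inference bill: {135_000 / bill:.0f}x")
```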

Two thousand seven hundred and thirty dollars. Against $135,000.

This number should provoke deep scepticism. It is too good to be true — because it is.

The Uber Parallel

The AI inference market in 2026 bears a structural resemblance to ride-hailing in 2014 that borders on eerie. OpenAI spent $8.67 billion on inference in the first nine months of 2025 — nearly double its revenue. Anthropic reportedly burns 70 cents of every dollar earned. These companies are selling tokens below the marginal cost of production, funded by the largest concentration of venture capital in technology history — SoftBank, Microsoft, Sequoia, Google, and Amazon collectively writing checks that assume market share today converts to pricing power tomorrow.

The logic is identical to Uber’s early playbook: subsidise heavily, capture the market, build switching costs, then figure out how to make money. Developers embed APIs, enterprises build workflows around specific models, users form habits and preferences — all of this represents future lock-in. The subsidy is not generosity; it is customer acquisition cost amortised across hundreds of billions of tokens.

Industry analysts estimate current API pricing may need to increase 3–10x to reach sustainable unit economics. Dario Amodei, Anthropic’s CEO, warned at the December 2025 DealBook Summit that “there are some players who are YOLO” — a reference not to AI scepticism, but to the timing risk of companies betting correctly on AI’s impact but incorrectly on when the economics will work. The math does not support five or more well-capitalised foundation model companies operating indefinitely at a loss. Consolidation is arithmetic, not speculation.

The Uber precedent is instructive in its specifics. Uber’s early riders in San Francisco enjoyed rides at 40–60% below taxi rates. Then the subsidies tapered. Prices rose 40–100% in most markets over three years. The service remained cheaper than taxis for many use cases, but the economic calculus changed materially — and the businesses built on the assumption of permanently subsidised pricing were forced to adapt or die. The same trajectory awaits AI inference, with one crucial difference: unlike ride-hailing, where the underlying cost structure (driver wages, fuel, vehicle depreciation) was relatively fixed, AI inference benefits from a genuine technology deflation curve. Hardware improves, models become more efficient, distillation reduces computational requirements. The net result is likely a price increase from today’s artificially low floor, stabilising at a level that is meaningfully higher than current rates but still dramatically below the cost of human labour.

Even under an aggressive scenario — a 10x increase in token costs over five years with no efficiency gains — the annual inference bill for an AI agent rises from $2,730 to $27,300. Significant, but still a fraction of the human cost. Inference pricing, it turns out, is not where the real economic story lies.
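One way to read the aggressive scenario is as a steady compound increase that reaches 10x by Year 5. The phasing below is a hypothetical assumption (the article specifies only the end point), but any path ending at 10x arrives at the same $27,300 and stays well under the human cost throughout.

```python
BASE_BILL = 2_730        # USD per year at today's prices
FINAL_MULTIPLE = 10      # aggressive scenario: 10x by the end of the horizon
YEARS = 5
HUMAN_COST = 135_000     # fully burdened annual cost of the employee

# Hypothetical phasing: equal compound growth each year until the multiple is reached.
annual_growth = FINAL_MULTIPLE ** (1 / YEARS)
path = [BASE_BILL * annual_growth ** year for year in range(YEARS + 1)]

for year, bill in enumerate(path):
    print(f"Year {year}: ${bill:,.0f} ({bill / HUMAN_COST:.1%} of the human cost)")
```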

The Costs Nobody Talks About

The token price commands disproportionate attention in industry discourse because it is the one number on the invoice. But it is, by a wide margin, the least consequential variable in the total cost equation. Two additional cost layers, infrastructure and error/risk, transform the economics from a fantasy into a strategic calculation.

The infrastructure layer. An AI agent does not materialise from an API key. It requires an orchestration platform, a vector database for company-specific knowledge, monitoring and observability tools, an API gateway for model routing, integration middleware connecting to enterprise systems, and cloud compute to run it all. For a mid-market company deploying 20 agents, this tooling stack runs approximately $120,000 per year, or $6,000 per agent.

More consequentially, the agents require people. A minimum viable AI operations team — an ML engineer, a solutions architect, and a half-time DevOps engineer — runs $410,000 per year. Add Year 1 buildout costs for systems integration ($200,000–$400,000 amortised over five years), ongoing maintenance ($60,000–$100,000 per year), security and compliance ($50,000 per year), and training and change management ($40,000–$60,000 in Year 1), and the total infrastructure bill lands at approximately $38,500 per agent in Year 1, settling to $34,000–$36,000 in steady state.

Infrastructure, not inference, is the real cost of AI deployment. In Year 1, inference represents just 7% of the combined inference and infrastructure cost per agent. The AI operations team alone accounts for more than half the infrastructure bill.
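The per-agent infrastructure figures can be rebuilt from the line items above. This sketch assumes all 20 agents share every cost equally, takes the midpoint of each quoted range, and drops the one-off training budget after Year 1; the dictionary keys are this sketch's own labels. The inference share is computed against inference plus infrastructure, the only two layers introduced so far.

```python
AGENTS = 20

def midpoint(low: float, high: float) -> float:
    return (low + high) / 2

# Annual platform-wide costs in USD (midpoints where the article gives a range).
recurring = {
    "tooling_stack": 120_000,
    "ai_ops_team": 410_000,
    "integration_amortised": midpoint(200_000, 400_000) / 5,  # five-year amortisation
    "maintenance": midpoint(60_000, 100_000),
    "security_compliance": 50_000,
}
year_one_only = {"training_change_mgmt": midpoint(40_000, 60_000)}

year_one_per_agent = (sum(recurring.values()) + sum(year_one_only.values())) / AGENTS
steady_per_agent = sum(recurring.values()) / AGENTS

inference = 2_730
inference_share = inference / (inference + year_one_per_agent)
team_share = recurring["ai_ops_team"] / (year_one_per_agent * AGENTS)

print(f"Year 1: ${year_one_per_agent:,.0f} per agent; steady state: ${steady_per_agent:,.0f}")
print(f"Inference share of inference + infrastructure: {inference_share:.1%}")
print(f"AI operations team share of Year 1 infrastructure: {team_share:.1%}")
```

At the midpoints, steady state lands at the top of the quoted $34,000–$36,000 band; the lower end corresponds to the low ends of the maintenance and buildout ranges.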

The error and risk layer. This is the cost that most AI economics analyses omit entirely, and it is the one that most dramatically reshapes the business case.

AI agents are probabilistic systems. They do not execute deterministic logic; they generate statistically likely outputs that are usually correct and occasionally spectacularly wrong. The industry shorthand for this is “hallucination,” but the term understates the operational reality. In agentic workflows — where agents reason in multi-step chains, call tools, interpret results, and build each subsequent action on prior outputs — errors compound. An agent that fabricates a non-existent API call, misinterprets retrieved data, or confabulates a client’s stated requirements does not just produce a wrong answer. It produces a wrong answer that looks right, delivered with the calm authority that makes AI outputs so seductively trustworthy.

The average hallucination rate across frontier models on general knowledge tasks remains around 9.2%, though well-architected production systems with retrieval-augmented generation have pushed this below 3%. Forrester Research estimates each enterprise employee costs roughly $14,200 per year in hallucination-related mitigation efforts. Microsoft’s 2025 data found knowledge workers spend 4.3 hours per week — over 10% of their working time — verifying AI outputs.

This verification burden manifests as four distinct cost categories.

First, human-in-the-loop (HITL) supervision: dedicated QA reviewers who sample and validate agent outputs, exception-handling staff who deal with cases the AI got wrong, and the ambient cognitive overhead imposed on adjacent workers who must second-guess outputs they did not produce. For a 20-agent deployment, this runs approximately $23,000 per agent per year.

Second, guardrail infrastructure: hallucination detection tools, content filtering and policy enforcement systems, automated testing suites, and prompt drift monitoring. These purpose-built systems sit on top of the general infrastructure stack and add roughly $4,500 per agent per year.

Third, direct error remediation: the cost of fixing mistakes that escape the HITL review and reach customers, partners, or decision-makers. At a 3% production error rate with 80% catch rate, this runs approximately $6,750 per agent per year for a general knowledge-work use case — with dramatically higher figures in regulated industries.
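The remediation estimate rests on how many errors slip past review. A back-of-envelope sketch, assuming the midpoint workload of 60 tasks per day from the inference section and treating each task as a single opportunity for error, gives the implied cost per escaped error. That per-error figure is derived here; it is not stated in the source.

```python
TASKS_PER_YEAR = 60 * 365       # assumed midpoint workload, every day of the year
ERROR_RATE = 0.03               # production error rate
CATCH_RATE = 0.80               # share of errors caught by HITL review
ANNUAL_REMEDIATION = 6_750      # USD per agent per year, as estimated in the text

escape_rate = ERROR_RATE * (1 - CATCH_RATE)
escaped_errors = TASKS_PER_YEAR * escape_rate
implied_cost_per_error = ANNUAL_REMEDIATION / escaped_errors

print(f"{escape_rate:.1%} of tasks escape review: about {escaped_errors:.0f} errors a year")
print(f"Implied remediation cost per escaped error: ${implied_cost_per_error:,.0f}")
```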

Fourth, liability and risk premium: AI-specific insurance, legal review of outputs in regulated contexts, and the expected-value cost of tail risks — the single catastrophic error that causes regulatory action or client loss. A reasonable mid-market estimate: $8,000 per agent per year.

Total error and risk cost: roughly $42,250 per agent per year. This single layer is more than fifteen times the Year 1 inference bill, and it exceeds the inference and infrastructure layers combined.
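Summing the four components confirms the layer total and shows how it compares with the other two layers, using the Year 1 inference bill ($2,730) and the Year 1 infrastructure figure ($38,500) from the preceding sections. The dictionary keys are labels chosen for this sketch.

```python
error_and_risk = {
    "hitl_supervision": 23_000,
    "guardrail_infrastructure": 4_500,
    "direct_remediation": 6_750,
    "liability_risk_premium": 8_000,
}
error_total = sum(error_and_risk.values())

inference_y1, infrastructure_y1 = 2_730, 38_500
print(f"Error and risk layer: ${error_total:,} per agent per year")
print(f"Inference plus infrastructure (Year 1): ${inference_y1 + infrastructure_y1:,}")
print(f"Error layer relative to inference: {error_total / inference_y1:.1f}x")
```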

The Honest Comparison

Aggregating all three cost layers — inference, infrastructure, and error/risk — produces the fully loaded cost of an AI agent:

Inference: roughly $4,700 per agent per year (five-year average under moderate token-price escalation). Infrastructure: roughly $35,000 per agent per year. Error and risk: roughly $42,250 per agent per year.

Total: approximately $82,000 per agent per year, or $410,000 over five years.

Against the employee’s five-year cost of $724,000, the AI agent delivers a cost advantage of roughly 1.8x. At larger scale — 50 agents, where infrastructure costs per agent drop to $17,400 — the advantage widens to 2.2x.
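The 20-agent figures in this comparison can be reproduced from the three layers. A minimal sketch, taking the article's five-year employee cost of $724,000 as given and holding each AI layer flat at its stated annual average; it also reports the error and risk layer's share of the total.

```python
YEARS = 5
EMPLOYEE_FIVE_YEAR = 724_000   # article's five-year employee cost, taken as given

# Per-agent annual figures for a 20-agent deployment, from the text.
layers = {
    "inference": 4_700,        # five-year average under moderate escalation
    "infrastructure": 35_000,
    "error_and_risk": 42_250,
}
annual_total = sum(layers.values())
five_year_total = annual_total * YEARS
advantage = EMPLOYEE_FIVE_YEAR / five_year_total
error_share = layers["error_and_risk"] / annual_total

print(f"Annual: ${annual_total:,}; five-year: ${five_year_total:,}")
print(f"Cost advantage: {advantage:.2f}x; error and risk share: {error_share:.0%}")
```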

These are real numbers, grounded in real costs, that deliver a real strategic advantage. They are also a universe away from the 30–50x advantage that inference-only analyses advertise. The gap between the headline number and the honest number is where fortunes will be made and lost.
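The 30–50x headline is what remains when only the inference line is counted. Two quick ratios, built from the article's own figures, bracket it: one compares Year 1 costs, the other compares five-year costs using the average inference bill.

```python
# Year 1: employee cost against the Year 1 inference bill alone.
year_one_multiple = 135_000 / 2_730
# Five years: employee cost against five years of average inference.
five_year_multiple = 724_000 / (5 * 4_700)

print(f"Inference-only advantage: {five_year_multiple:.0f}x to {year_one_multiple:.0f}x")
```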

The Asymmetry of Error

There is, however, a critical counterargument that most critiques of AI error economics fail to address: human error is not zero, and its costs are not tracked.

Human data entry without verification has an error rate as high as 4%. The average employee makes 118 workplace errors per year. Human error accounts for 80% of process failures across industries. The cost of bad data from human error in the United States alone is estimated at $3.1 trillion annually. A conservative estimate of annual error cost per human knowledge worker — rework, corrections, downstream impacts — runs $8,000–$20,000.

None of this appears in the $135,000 fully loaded employee cost. It is absorbed into the operating budget as “normal.” Few enterprises run systematic output verification on their human knowledge workers the way 76% of enterprises now verify AI outputs.

The error profiles are also structurally different. Human errors are inconsistent, idiosyncratic, and hard to detect systematically. They emerge from fatigue, distraction, emotional state, and individual knowledge gaps. AI errors are patterned and detectable. They cluster around specific failure modes — hallucination, context overflow, prompt ambiguity — that can be tested, monitored, and mitigated. The guardrail infrastructure is expensive, but it works in ways that have no human-error equivalent.

And the trajectories diverge. Hallucination rates on major benchmarks are declining approximately 3 percentage points annually. Production systems with properly implemented RAG achieve 71% reduction in hallucination rates. If the current improvement rate holds, top models could approach near-zero hallucination on structured tasks by 2027–2028. By Year 3–4 of a deployment, the error/risk layer should decline 30–40% from Year 1 levels as models improve, as the guardrail stack matures, and as the AI operations team accumulates institutional knowledge about which failure modes matter and which are benign.
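Two figures from this section can be combined with the 9.2% baseline quoted earlier. The sketch applies the 71% RAG reduction to that baseline, then projects the baseline forward with a straight-line decline of 3 percentage points a year. Both the linear extrapolation and the floor at zero are simplifying assumptions made here, not forecasts from the source.

```python
BASELINE_RATE = 0.092     # average frontier-model rate on general knowledge tasks
RAG_REDUCTION = 0.71      # relative reduction reported for well-implemented RAG
ANNUAL_DECLINE = 0.03     # 3 percentage points per year, expressed as a fraction

rag_rate = BASELINE_RATE * (1 - RAG_REDUCTION)
print(f"With RAG: {rag_rate:.2%} (baseline {BASELINE_RATE:.1%})")

def projected_rate(start: float, years: int) -> float:
    """Straight-line projection floored at zero; a simplifying assumption."""
    return max(start - ANNUAL_DECLINE * years, 0.0)

for years in range(5):
    print(f"Baseline after {years} year(s): {projected_rate(BASELINE_RATE, years):.1%}")
```

The RAG-adjusted rate of about 2.7% is consistent with the "below 3%" figure for well-architected production systems quoted earlier.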

Human error rates, by contrast, have not materially changed in decades. No training programme, no process improvement initiative, no quality management system has meaningfully reduced the base rate of human knowledge-worker errors. Fatigue still causes mistakes at 3 a.m. Distraction still causes mistakes after lunch. Overconfidence still causes experienced professionals to skip verification steps they have performed a thousand times before. AI error is an engineering problem on a declining curve. Human error is a biological constant. Over a five-year horizon, this asymmetry in trajectory matters more than the asymmetry in current rates.

What the Alternatives Actually Cost

Before committing to AI agents, the rational enterprise should price the alternatives.

Offshore BPO, the incumbent labour arbitrage, places a dedicated knowledge worker in the Philippines or India at $8–$15 per hour, or roughly $25,000 per year. On paper, that undercuts even the fully loaded AI agent. But offshore costs inflate 5–8% annually with rising wages, carry 30–50% rework overhead when poorly managed, and impose time-zone friction that AI does not. Attrition is chronic; replacing a departed offshore worker costs 1.5–2x annual salary.
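To see where the roughly $25,000 figure comes from and how wage inflation erodes it, the sketch below assumes a 2,080-hour working year (40 hours × 52 weeks; the article does not state the hours) and compounds the quoted 5–8% annual increases over five years. Rework overhead and attrition are left out for simplicity. On these assumptions, $25,000 sits near the middle of the Year 1 range.

```python
HOURS_PER_YEAR = 40 * 52   # assumption: a standard full-time year of 2,080 hours

def five_year_cost(first_year: float, inflation: float, years: int = 5) -> float:
    """Total spend when the annual cost compounds at a fixed inflation rate."""
    return sum(first_year * (1 + inflation) ** y for y in range(years))

for hourly in (8, 15):
    annual = hourly * HOURS_PER_YEAR
    at_5, at_8 = five_year_cost(annual, 0.05), five_year_cost(annual, 0.08)
    print(f"${hourly}/hour: ${annual:,} in Year 1, "
          f"${at_5:,.0f}-${at_8:,.0f} over five years at 5-8% inflation")
```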

Robotic process automation — UiPath, Automation Anywhere, Microsoft Power Automate — runs $1,200–$8,000 per bot per year for licensing, with complex enterprise deployments reaching $30,000–$80,000 per automated process. RPA automates procedures, not judgment. It handles the 20–30% of knowledge work that is structured and rule-bound. For the remaining 70–80% that requires natural-language reasoning, contextual understanding, and adaptive behaviour, RPA has nothing to offer.

Low-code automation (Zapier, Make) costs $5,000–$25,000 per year for a mid-market firm and automates plumbing between systems. Managed services run $150–$300 per user per month. Freelance platforms provide on-demand workers at $15–$75/hour but do not scale to continuous operations.

No single alternative cleanly replicates what AI agents do. The pre-AI toolkit is a patchwork (offshore for the cheap cognitive layer, RPA for the structured process layer, low-code for the connective layer) that costs $80,000–$120,000 per replaced knowledge worker, with significant coverage gaps and management overhead. The AI agent collapses these layers into a single platform at $82,000 per equivalent worker. It is not merely cheaper; it is architecturally simpler.

The Strategic Calculus

The fully loaded analysis yields five conclusions that should govern how mid-market enterprises approach AI deployment.

Scale is the critical lever. At 5 agents, infrastructure costs $74,000 per agent and the business case is marginal. At 20, it drops to $31,500–$38,500 and becomes strong. At 50, it falls to $17,400 and the cost advantage over the human equivalent widens to roughly 2.2x. Half-hearted pilots with two or three agents will not demonstrate ROI and will be cited as evidence that AI does not work. The correct approach is to identify a cluster of 15–25 roles with sufficient task similarity to share a common platform and deploy simultaneously.
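The scale effect can be made concrete by holding inference and error costs fixed and varying only the per-agent infrastructure figure quoted for each fleet size, taking $35,000 as the midpoint of the 20-agent range. This is a sketch under that simplification; in practice the error and risk layer may also shift with scale. The human benchmark is the article's five-year employee cost of $724,000, averaged per year.

```python
HUMAN_ANNUAL = 724_000 / 5      # five-year employee cost, averaged per year
INFERENCE_AVG = 4_700           # USD per agent per year
ERROR_AND_RISK = 42_250         # USD per agent per year, held constant here
INFRA_BY_FLEET = {5: 74_000, 20: 35_000, 50: 17_400}   # USD per agent per year

def agent_annual_cost(infra_per_agent: float) -> float:
    """Fully loaded annual cost of one agent for a given infrastructure share."""
    return INFERENCE_AVG + infra_per_agent + ERROR_AND_RISK

for fleet, infra in INFRA_BY_FLEET.items():
    cost = agent_annual_cost(infra)
    print(f"{fleet:>2} agents: ${cost:,.0f} per agent, "
          f"{cost / HUMAN_ANNUAL:.0%} of the human cost "
          f"({HUMAN_ANNUAL / cost:.2f}x advantage)")
```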

Budget for the full error stack from day one. The error and risk layer is not an afterthought; it is 52% of the total cost in steady state. Enterprises that deploy AI agents without budgeting for HITL supervision, guardrail tooling, and remediation processes will discover these costs the hard way — typically when the first hallucination reaches a client deliverable.

The subsidy window is real and finite. Current token prices are artificially low. Building the AI platform and team now means cheap tokens for immediate ROI and a maturing infrastructure that will be ready when prices rise. Waiting for “stable” pricing means paying higher inference rates and Year 1 buildout costs simultaneously.

The AI operations team is the new strategic hire. The 2.5 FTEs running the AI platform generate more economic value per dollar of compensation than any other function in the organisation. An operations lead who optimises routing, improves accuracy, and reduces maintenance burden pays for the entire team in a single quarter.

The advantage is 1.8–2.2x, not 30x. This is still a transformative economic proposition — comparable to the gains that drove the first wave of offshore outsourcing — but it demands rigorous implementation, not casual deployment. The enterprises that win will be those that treat AI agents as an engineering discipline with full cost accounting, not as a magic cost-elimination tool that runs on API calls alone.

The $100,000 knowledge worker is not about to be replaced by $2,730 worth of tokens. They are about to be replaced by $82,000 worth of infrastructure, supervision, guardrails, and tokens — an honest number that still makes the case, but makes it on terms that survive contact with reality. The enterprises that internalise this distinction will build durable competitive advantages. The ones that chase the headline number will build fragile systems that shatter on first contact with a hallucinated client deliverable.

The token is a new unit of economic output that could finally make productivity measurable, especially in knowledge work, where that measure has long been elusive. Understanding what a token truly costs, on both sides of the human-machine divide, is the foundational competence of the next decade of enterprise strategy.

This analysis is part of a broader framework on AI transformation economics for mid-market enterprises. The models and assumptions are detailed in the companion research document “The Token Economy: A First-Principles Analysis of AI Labour Substitution.”

Additional reading: Agents of Chaos