You Are Not Behind on AI. You Are Behind on Knowing Your Own Business.

The most consequential technology investment most companies will ever make is being guided by a map they drew from memory — and memory, it turns out, is a poor cartographer.

The AI anxiety that pervades mid-market companies in 2026 follows a predictable script. A CEO attends a conference. A board member forwards a McKinsey report. A competitor announces an “AI-powered” something. The result is a mandate — usually vague, always urgent — to “get moving on AI.” What follows is a procurement exercise dressed up as a transformation strategy: vendor demos, proof-of-concept pilots, and a budget line that nobody can tie to an operational outcome.

The assumption underpinning all of this activity is that the company knows what it does: that it understands, with reasonable precision, how work moves through its organisation, where value is created, where time is wasted, where decisions are made by policy and where they are made by habit, which processes are load-bearing and which are vestigial. This assumption is almost always wrong.

The real deficit is not in AI capability. It is in operational self-knowledge.

The Illusion of Understanding

Every business has an official version of how it operates. It lives in process documentation written during the last ERP implementation, in org charts that reflect reporting lines but not decision flows, in SOPs that describe how work should happen rather than how it does happen. This official version is what gets presented to consultants, auditors, and now — fatally — to AI vendors designing automation workflows.

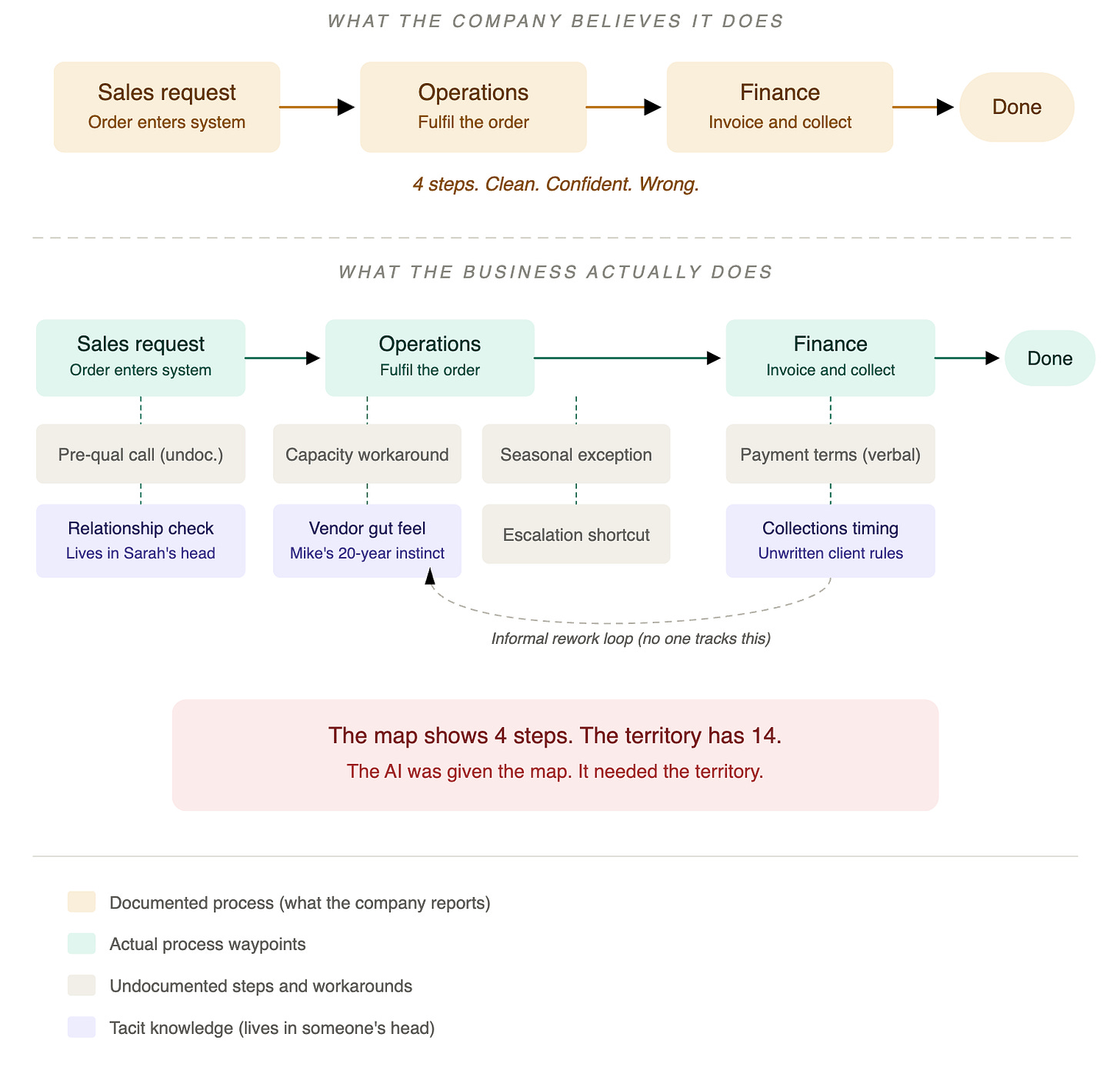

The actual operation of the business bears a complicated relationship to these documents. A shipping workflow might officially consist of eight steps from sales request to dispatch. The real workflow involves fourteen steps, three of which exist because of a quality-control bottleneck introduced in 2019 that nobody documented, and two of which involve a senior supply chain manager making judgment calls based on relationships with specific warehouse staff. The official process is a skeleton. The actual process is the skeleton plus twenty years of scar tissue, workarounds, tribal knowledge, and learned behaviour that collectively determine whether the business functions or seizes.

This gap between the official and the actual is not a failure of documentation. It is a structural feature of how organisations evolve. Businesses adapt continuously to new constraints, personnel changes, client demands, and operational surprises. These adaptations are rational and often effective. But they accumulate outside formal systems. They live in the heads of experienced operators, in the undocumented logic of Excel macros, in the email chains that constitute the real approval process, and in the relationships that determine whether a vendor delivers on time or delivers when they get around to it.

In a pre-AI world, this gap was survivable. Humans are remarkably good at navigating ambiguity. The experienced operator who “just knows” that the Chicago warehouse runs slow in February, that Client X always exaggerates urgency, that the compliance team needs three business days despite the policy saying five — this person compensates for every gap in the documented process. The organisation works not because its systems are complete, but because its people fill in everything the systems leave out.

AI does not fill in. AI executes on what it is given. And what it is given, in most organisations, is the official version — the skeleton without the scar tissue.

The Expensive Consequence

In The Token Economy, I built a detailed cost model comparing the fully loaded expense of a knowledge worker ($135,000 per year) against the equivalent AI agent deployment ($82,000 at a 20-agent mid-market scale). The economics are compelling on the spreadsheet. But the spreadsheet assumes that the AI agent has access to everything the human employee knew — not just the documented procedures, but the contextual intelligence that made those procedures actually work.
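The headline arithmetic can be sketched as follows. This is a minimal illustration, not the cost model from The Token Economy. It assumes the two figures are directly comparable annual costs per role, and `hidden_context_cost` is a hypothetical parameter standing in for whatever the missing contextual knowledge costs in rework, client attrition, or bad decisions. The $60,000 used in the second call is an invented value chosen only to show the sign flipping.

```python
HUMAN_COST = 135_000  # fully loaded knowledge worker, per year (figure from the text)
AGENT_COST = 82_000   # equivalent AI agent deployment at 20-agent scale (figure from the text)


def net_saving(hidden_context_cost: float = 0.0) -> float:
    """Annual saving per role after subtracting an assumed cost of missing context."""
    return HUMAN_COST - AGENT_COST - hidden_context_cost


headline = net_saving()
print(f"Headline saving: ${headline:,.0f} ({headline / HUMAN_COST:.0%} of human cost)")

# Hypothetical: missing context costs $60,000 a year in rework and lost clients.
print(f"Saving with hidden cost: ${net_saving(60_000):,.0f}")
```

On these assumptions the spreadsheet saving is $53,000 per role, and any hidden cost of missing context above that amount turns the saving into a loss.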

In The Ingenuity Ledger, I identified the institutional knowledge gap as the most underpriced risk in the AI replacement thesis. The argument is worth restating in sharper terms here: institutional knowledge is not a sentimental concept. It is the operating system of the business. When a company replaces experienced employees with AI agents without first capturing the contextual knowledge those employees carry, it is not optimising. It is lobotomising. The AI agent will execute the documented process flawlessly. The documented process is incomplete. The outputs will be technically correct and operationally disastrous.

This is the scenario playing out across mid-market enterprises that rushed to deploy AI in 2024 and 2025. The vendor demo was persuasive. The pilot looked promising. The full deployment produced results that were subtly, persistently wrong: not in ways that triggered error alerts, but in ways that eroded client satisfaction, introduced process friction, and generated decisions that an experienced human would never have made. An AI agent routes the high-value client through the standard escalation path because the CRM does not contain the note about her preference for direct CEO access. An automated procurement workflow selects the lowest-cost vendor because the system does not encode the knowledge that this vendor’s on-time delivery rate collapses during peak season. A compliance agent applies the published policy without accounting for the informal guidance that the regulator’s local office has been communicating verbally for three years.

Each of these failures traces to the same root cause: the company did not know its own business well enough to teach it to a machine.

Why Nobody Knows

The question is why this ignorance persists. Mid-market companies are not staffed by fools. Their leaders are experienced operators who have built and run businesses for decades. How can they not understand how their own organisation works?

Three structural factors explain the gap.

The first is survivorship of tacit knowledge. The most valuable operational intelligence in any organisation is the knowledge that experienced employees carry but never formalise. It accumulates through years of pattern recognition, relationship development, and repeated exposure to edge cases. This knowledge is genuinely difficult to externalise — not because the employees are hoarding it, but because much of it is pre-verbal. The warehouse manager who can tell from the sound of the conveyor belt that it needs maintenance does not have a rule she can write down. She has ten thousand hours of auditory pattern matching that her conscious mind has compressed into “something’s off.” The account manager who knows which client emails signal real urgency and which signal performative urgency did not learn this from a training manual. He learned it from three years of calibrating his responses to outcomes. This knowledge cannot be extracted by asking “tell me how you do your job.” The employee does not know how she does her job, any more than a professional tennis player can articulate the biomechanics of her backhand. She just does it.

The second factor is documentation decay. Even when processes are documented, the documentation degrades. The half-life of an accurate process document in a dynamic mid-market business is roughly six to twelve months. After that, the business has changed — a new vendor, a new compliance requirement, a new client demand, a team restructure — and the document has not. The effort required to keep documentation current is substantial and produces no visible output. It does not close a deal, ship a product, or satisfy a client. It is pure overhead, and in resource-constrained organisations, pure overhead loses to urgent priorities every time.
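To make the half-life figure concrete, here is a small sketch. It assumes accuracy decays exponentially, which is one reading of "half-life"; the essay gives only the six-to-twelve-month range, not a decay model, and the function name is hypothetical.

```python
def fraction_still_accurate(months_elapsed: float, half_life_months: float) -> float:
    """Share of a process document still accurate, assuming exponential decay."""
    if half_life_months <= 0:
        raise ValueError("half_life_months must be positive")
    return 0.5 ** (months_elapsed / half_life_months)


# Two years after the last ERP implementation, at each end of the stated range.
for half_life in (6, 12):
    share = fraction_still_accurate(24, half_life)
    print(f"half-life {half_life} months: {share:.1%} accurate after 24 months")
```

Under that assumption, a document untouched for two years is between roughly 6% and 25% accurate, depending on which end of the range applies.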

The third factor is the org chart fallacy. Organisations describe themselves in terms of structure — departments, roles, reporting lines. But the actual work of the business flows through processes, not structures. A single client engagement might traverse sales, legal, operations, finance, and customer success, with decision points at each boundary that are governed by informal norms rather than documented policies. The org chart tells you who reports to whom. It does not tell you who actually decides whether to extend payment terms to a struggling client, or how the operations team communicates capacity constraints to sales before they become delivery failures, or why the finance team processes invoices from one division in three days and from another in twelve. These cross-functional flows — the connective tissue of the business — are almost never documented because they do not belong to any single department and therefore nobody owns the documentation.

The Living Document Thesis

The solution is not a one-time documentation exercise. It is not a consulting engagement that produces a 200-page process manual and declares victory. That manual will be obsolete before the ink dries, and it will not capture the tacit knowledge that matters most.

The solution is an institutional practice — a discipline of continuous operational observation, documentation, and refinement that produces a living document: a persistently current, cross-functionally maintained record of how the business actually works.

This is not a new idea. Toyota’s production system, one of the most thoroughly documented operational methodologies in business history, was built on exactly this principle: go to the gemba (the place where the work is actually done), observe the actual work, document what you see, identify the gaps between what should happen and what does happen, and close them. The innovation is not in the concept. It is in the application of this discipline to knowledge work, where the “gemba” is harder to visit because the work is invisible: it happens in email threads, Slack messages, decision meetings, and the space between a question and a judgment.

What does this living document contain? It is not a process map, though it may include them. It is not an SOP library, though it draws from them. It is, at its core, a structured and continuously updated record of four things.

How decisions actually get made. Not the approval matrix in the policy manual, but the real decision architecture. Who has de facto authority over pricing exceptions? What information does the operations lead actually use when she decides to expedite an order? When the documented escalation path says “notify the VP,” does the VP actually get involved, or does the senior manager resolve it and notify the VP after the fact? Decision architecture is the highest-value layer of operational self-knowledge because it determines where human judgment is load-bearing and where it is ceremonial — a distinction that becomes existential when you are deciding which decisions to hand to AI.

Where the process deviates from the documentation. Every deviation represents either a problem to fix or an adaptation to preserve. The shipping team that added three undocumented quality-control steps is not violating the process. It is compensating for a deficiency in the process — one that the documented version does not acknowledge. Mapping these deviations is not about enforcement. It is about understanding the real process well enough to automate it correctly.

What knowledge lives in people’s heads. This is the tacit knowledge challenge, and it requires a specific methodology: structured observation of experienced operators performing their work, followed by structured debriefing to surface the decision logic they apply unconsciously. The goal is not to extract every piece of tacit knowledge — some will resist externalisation regardless of effort. The goal is to capture the middle band: knowledge that is not currently documented but could be with deliberate effort. In The Ingenuity Ledger, I described this middle band as the target of the Context Layer in the Modern AI Construct’s five-layer architecture. The living document is the precursor to that Context Layer. You cannot build a Context Layer for your AI architecture if you do not first know what context exists.

How information flows across functional boundaries. The handoffs between departments are where most operational failures originate and where most tacit knowledge concentrates. The sales-to-operations handoff, the operations-to-finance handoff, the customer-success-to-product handoff — each of these boundaries has an official protocol and an actual practice, and the distance between the two is where the business either functions smoothly or fails silently.
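Of the four items, deviation mapping is the easiest to sketch in code. The example below compares a documented step list with an observed one. It is an illustration only: the step names are invented, and a real process would need fuzzier matching than exact step names.

```python
def map_deviations(documented: list[str], observed: list[str]) -> dict[str, list[str]]:
    """Compare a documented process with what observers actually saw."""
    documented_set, observed_set = set(documented), set(observed)
    return {
        # Steps people perform that the documentation never mentions.
        "undocumented": [s for s in observed if s not in documented_set],
        # Steps the documentation requires that nobody was seen performing.
        "skipped": [s for s in documented if s not in observed_set],
    }


# Hypothetical shipping workflow, shortened for readability.
documented = ["receive sales request", "pick", "pack", "dispatch"]
observed = ["receive sales request", "pick", "re-inspect seals", "pack",
            "call warehouse lead", "dispatch"]

result = map_deviations(documented, observed)
print(result["undocumented"])
print(result["skipped"])
```

Each entry in the result is a prompt for the question the text poses: is this a problem to fix or an adaptation to preserve?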

The Living Document as Decision Infrastructure

The point of this exercise is not documentation for its own sake. The point is that the living document becomes the decision infrastructure for every significant investment the company makes — AI or otherwise.

When a company evaluates an AI deployment, the first question is not “which vendor?” or “what’s the ROI?” The first question is: “Do we understand the process we are trying to automate well enough to specify it to a machine?” If the answer is no — and for most mid-market companies, for most processes, the answer is no — then the AI investment is premature. Not wrong. Premature.

The Modern AI Construct’s five-layer architecture — Systems of Record, Context Layer, Agents, Orchestration, Systems of Engagement — makes this dependency explicit. The Context Layer sits between the raw data in your systems of record and the AI agents that act on it. It contains the embeddings, knowledge graphs, decision histories, and institutional memory that give AI agents the contextual intelligence to produce outputs that are not merely technically accurate but operationally appropriate. Most organisations skip this layer. They go directly from systems of record to agents — from raw data to AI action — and are surprised when the AI does things that no experienced employee would do. The Context Layer cannot be built from nothing. It is built from the systematic capture of exactly the operational knowledge described above. The living document is the raw material from which the Context Layer is constructed.
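The cost of skipping the Context Layer can be shown with a toy version of the procurement example from earlier. Everything here is hypothetical: the vendor names, the prices, the shape of `CONTEXT_NOTES`, and the selection rule are invented for illustration and are not part of the Modern AI Construct itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    name: str
    unit_cost: float  # the only fact the system of record holds


# Hypothetical context-layer entry: knowledge that lives outside the system of record.
CONTEXT_NOTES = {"Vendor A": {"unreliable_in_peak_season": True}}


def pick_vendor(vendors, peak_season, context=None):
    """Pick the cheapest vendor, excluding known peak-season risks when context is supplied."""
    candidates = list(vendors)
    if context is not None and peak_season:
        reliable = [
            v for v in candidates
            if not context.get(v.name, {}).get("unreliable_in_peak_season", False)
        ]
        if reliable:  # fall back to all vendors if none are marked reliable
            candidates = reliable
    return min(candidates, key=lambda v: v.unit_cost)


vendors = [Vendor("Vendor A", 9.50), Vendor("Vendor B", 10.25)]

# Systems of record straight to agent: cheapest wins.
print(pick_vendor(vendors, peak_season=True).name)
# With the context layer in between: the peak-season risk is excluded.
print(pick_vendor(vendors, peak_season=True, context=CONTEXT_NOTES).name)
```

Both calls are "correct" against the data they were given. Only the second reflects what an experienced buyer would do, and it can do so only because someone captured the note.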

This reframes the AI readiness question entirely. The Thinkbridge AI Maturity Framework scores organisations from Level 1 (Ad Hoc) through Level 5 (Transformative). The 2026 evidence suggests that the majority of mid-market organisations sit at Level 1 or 2. The conventional interpretation is that these companies need to accelerate their AI adoption. The better interpretation is that they need to decelerate their AI procurement and accelerate their operational self-knowledge. A Level 1 organisation that thoroughly understands its own operations is better positioned for AI than a Level 3 organisation that does not.

The Knowledge Depreciation Problem

There is a clock running on this, and it is the knowledge depreciation clock I described in The Ingenuity Ledger. Every day that a business operates without capturing its institutional knowledge, that knowledge becomes harder to capture. Employees leave. Processes evolve. The gap between what is documented and what is real widens. The scar tissue thickens.

Worse, the AI hype cycle is actively accelerating this depreciation. Companies that deploy AI agents to replace experienced employees before capturing what those employees know are permanently destroying institutional knowledge. The knowledge does not migrate to the AI system. It simply vanishes. The AI agent does not know what it does not know. It continues to produce confident outputs based on an increasingly fictional model of the business. And the person who would have noticed the fiction — the experienced operator who spent fifteen years developing the contextual intelligence to spot when something was “off” — is gone.

The ingenuity paradox, restated: the value AI extracts from human institutional knowledge is a depreciating asset that requires ongoing human input to refresh. The living document is the mechanism by which that refresh occurs. Without it, every AI deployment is building on a foundation that erodes from the moment it is poured.

What This Actually Looks Like

The living document is not a project. It is a practice, and it requires three commitments.

The first is dedicated observation time. Someone — ideally a cross-functional team with operational credibility — must spend time watching how work actually happens. Not reading process documents. Not interviewing managers about how their teams operate. Watching. Sitting with the account manager as she triages her inbox. Walking the warehouse floor during the shift change. Attending the Monday pipeline review and noting who speaks, who defers, and what information drives the actual decisions. This is unglamorous, slow, and irreplaceable.

The second is structured capture. Observation without documentation is just tourism. The living document requires a consistent structure (decision logs, process deviation records, tacit knowledge interviews, cross-functional handoff maps) that makes the captured knowledge searchable, easy to reference, and actionable. The format matters less than the discipline. A well-maintained Notion database is far more valuable than a beautifully designed document that nobody updates.
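As a rough sketch of what "consistent structure" might mean in practice, the example below models only the decision log; the other record types would follow the same pattern. All class names, field names, and the 180-day review window are assumptions made for illustration, not a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class DecisionLogEntry:
    decision: str          # e.g. "approve pricing exception"
    documented_owner: str  # who the policy manual says decides
    actual_owner: str      # who was observed deciding
    last_observed: date    # when someone last watched this happen

    def authority_differs(self) -> bool:
        return self.documented_owner != self.actual_owner


@dataclass
class LivingDocument:
    decisions: list[DecisionLogEntry] = field(default_factory=list)

    def needs_reobservation(self, today: date, max_age_days: int = 180) -> list[DecisionLogEntry]:
        """Entries not re-observed within max_age_days (an assumed review window)."""
        return [d for d in self.decisions if (today - d.last_observed).days > max_age_days]


# Hypothetical entries.
doc = LivingDocument([
    DecisionLogEntry("approve pricing exception", "VP Sales",
                     "senior account manager", date(2026, 1, 10)),
    DecisionLogEntry("expedite order", "operations lead",
                     "operations lead", date(2025, 3, 2)),
])
today = date(2026, 3, 1)

print([d.decision for d in doc.decisions if d.authority_differs()])
print([d.decision for d in doc.needs_reobservation(today)])
```

The two queries correspond to the two things the practice has to keep surfacing: where de facto authority differs from the documented authority, and which entries are old enough that they may no longer describe the business.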

The third is institutional authority. The living document must be referenced when decisions are made. When the executive team evaluates an AI vendor, the living document should be on the table. When operations proposes a workflow change, the living document should inform the impact assessment. When finance builds the business case for a technology investment, the living document should provide the operational reality that the spreadsheet cannot capture. If the document exists but is not used, it decays into another artifact that nobody maintains. If it is used — if it becomes the shared reference point for how the business actually works — it stays alive because the people who rely on it have a stake in its accuracy.

The Competitive Advantage Nobody Is Building

The irony of the AI era is that the companies best positioned to exploit it are not the ones with the most sophisticated technology. They are the ones with the most sophisticated understanding of their own operations. A company that has meticulously documented how it actually works (its real decision architecture, its real process flows, its real institutional knowledge) can deploy AI that is genuinely transformative. The Context Layer can be built directly from the living document. The agents operate on accurate contextual intelligence. The knowledge depreciation clock slows because the refresh mechanism is already in place.

A company that has not done this work will buy the same AI tools, deploy the same models, and produce results that are subtly, persistently, expensively wrong.

The gap between these two outcomes is not a technology gap. It is a self-knowledge gap. And closing it requires no AI at all. It requires discipline, humility, and the willingness to look at your own business with the eyes of a stranger — to see what is actually there, rather than what the org chart and the process manual say should be there.

You are not behind on AI. You are behind on knowing your own business. The first step is to admit that. The second step is to start watching.

This is the third in a series on AI transformation economics. The first — The Token Economy — presents the fully loaded cost model for AI labour substitution. The second — The Ingenuity Ledger — identifies the blind spots in the replacement thesis. The architectural framework referenced here is detailed in The Modern AI Construct.